During its big GPT-5 livestream on Thursday, OpenAI showed off a few charts that made the model seem quite impressive — but if you look closely, some graphs were a little bit off.

In one, ironically showing how well GPT-5 does in “deception evals across models,” the scale is all over the place. For “coding deception,” for example, the chart shown onstage says GPT-5 with thinking gets a 50.0 percent deception rate, yet o3’s lower 47.4 percent score somehow has a larger bar. OpenAI appears to have accurate numbers for this chart in its GPT-5 blog post, however, where GPT-5’s deception rate is labeled as 16.5 percent.

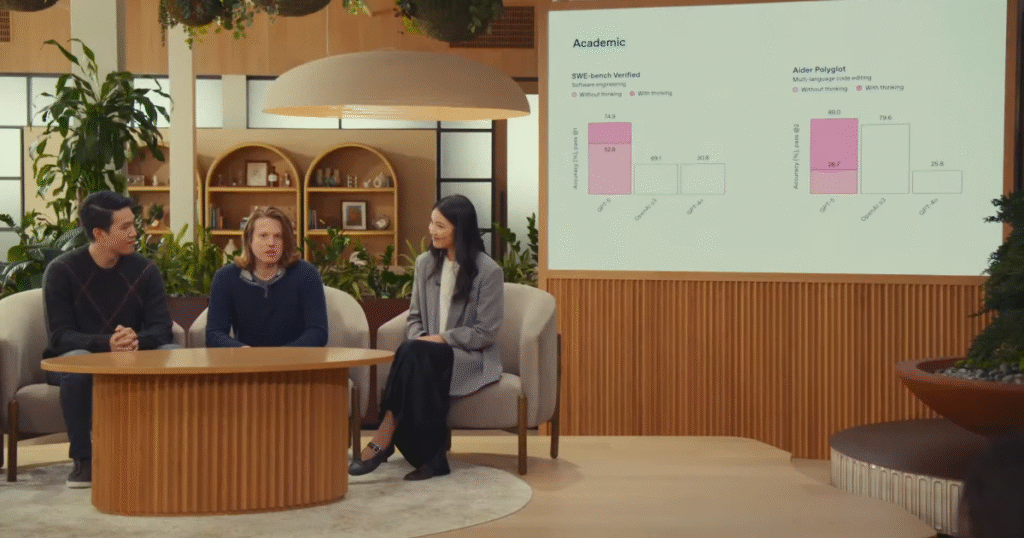

With this chart, OpenAI showed onstage that one of GPT-5’s scores is lower than o3’s but is shown with a bigger bar. In this same chart, o3 and GPT-4o’s scores are different but shown with equally sized bars.

It was bad enough that CEO Sam Altman commented on it, calling it a “mega chart screwup,” though he noted that a correct version is in OpenAI’s blog post.

An OpenAI marketing staffer also apologized, saying, “We fixed the chart in the blog guys, apologies for the unintentional chart crime.”

On Friday, in response to a Reddit user asking about the graphs, Altman said that “the numbers here were accurate but we screwed up the bar charts in the livestream overnight; on another slide we screwed up numbers.” He also noted that the blog post and system card were “accurate” and said that “people were working late and were very tired, and human error got in the way. A lot comes together for a livestream in the last hours.”

It’s still not a great look for the company on its big launch day — especially when it is touting the “significant advances in reducing hallucinations” with its new model.

Update, August 8th: Added Reddit comment from Altman.