- Future AI memory chips could demand more power than entire industrial zones combined

- 6TB of memory in one GPU sounds amazing until you see the power draw

- HBM8 stacks are impressive in theory, but terrifying in practice for any energy-conscious enterprise

The relentless drive to expand AI processing power is ushering in a new era for memory technology, but it comes at a cost that raises practical and environmental concerns, experts have warned.

Research by the Korea Advanced Institute of Science & Technology (KAIST) and the Terabyte Interconnection and Package Laboratory (TERA) suggests that by 2035, AI GPU accelerators equipped with 6TB of high-bandwidth memory (HBM) could become a reality.

These developments, while technically impressive, also highlight the steep power demands and increasing complexity involved in pushing the boundaries of AI infrastructure.

Rise in AI GPU memory capacity brings huge power consumption

The researchers' roadmap suggests that the evolution from HBM4 to HBM8 will deliver major gains in bandwidth, memory stacking, and cooling techniques.

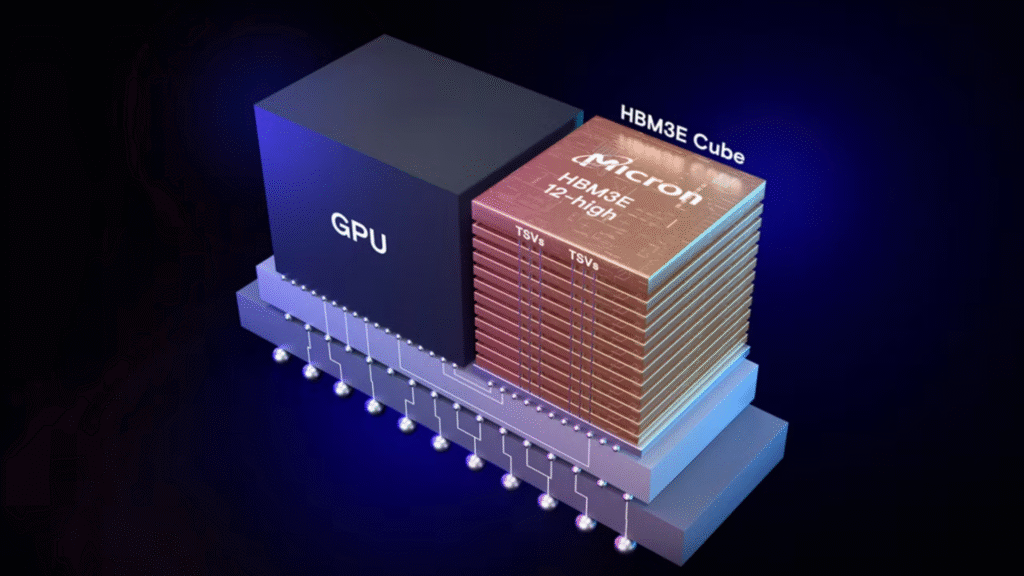

Starting in 2026 with HBM4, Nvidia’s Rubin and AMD’s Instinct MI400 platforms will incorporate up to 432GB of memory, with bandwidths reaching nearly 20TB/s.

This memory type employs direct-to-chip liquid cooling and custom packaging methods to handle power densities around 75 to 80W per stack.

HBM5, projected for 2029, doubles the input/output lanes and moves toward immersion cooling, with up to 80GB per stack consuming 100W.

Power requirements continue to climb with HBM6, anticipated by 2032, which pushes per-stack bandwidth to 8TB/s and capacity to 120GB, with each stack drawing up to 120W.

These figures add up quickly: a full GPU package with 16 HBM6 stacks is expected to consume up to 5,920W.
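To put the HBM6 numbers in proportion, the short sketch below works out how much of the package power the memory stacks alone would account for. It uses only the figures quoted above (16 stacks, up to 120W each, 5,920W per package); the variable names are illustrative, and treating every stack as drawing its full 120W at once is an assumption.

```python
# Figures quoted in the article for the HBM6 generation.
STACKS_PER_PACKAGE = 16
WATTS_PER_STACK = 120      # upper bound per HBM6 stack
PACKAGE_WATTS = 5_920      # quoted upper bound for the full GPU package

# Assumption: all stacks draw their maximum power simultaneously.
hbm_watts = STACKS_PER_PACKAGE * WATTS_PER_STACK
hbm_share = hbm_watts / PACKAGE_WATTS

print(f"HBM6 stacks: {hbm_watts} W ({hbm_share:.0%} of the package)")
# prints "HBM6 stacks: 1920 W (32% of the package)"
```

On these figures, memory would account for roughly a third of the package's power budget, with the rest going to the remainder of the package.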

By the time HBM7 and HBM8 arrive, the numbers stretch into previously unimaginable territory.

HBM7, expected around 2035, triples per-stack bandwidth to 24TB/s and enables up to 192GB per stack. The architecture supports 32 memory stacks, pushing total memory capacity beyond 6TB, but power demand reaches 15,360W per package.

That estimate represents a roughly sevenfold rise in just nine years, counting from the HBM4 generation due in 2026.

A million of these packages in a data center would draw 15.36GW, a figure that roughly equals the UK’s entire onshore wind generation capacity in 2024.
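The HBM7 arithmetic is easy to check. The sketch below reproduces the 6TB capacity and 15.36GW fleet figures from the numbers quoted above; the variable names are illustrative, and the fleet total is an assumption-laden lower bound that counts only the accelerator packages, not cooling, networking, or other facility overhead.

```python
# Figures quoted in the article for the HBM7 generation.
STACKS_PER_PACKAGE = 32
GB_PER_STACK = 192
PACKAGE_WATTS = 15_360
PACKAGES = 1_000_000       # the article's hypothetical fleet size

# Total memory per package.
total_gb = STACKS_PER_PACKAGE * GB_PER_STACK   # 6,144 GB
total_tb = total_gb / 1024                     # 6.0 TB using 1 TB = 1,024 GB

# Fleet power, accelerators only (assumption: every package at full draw).
fleet_gw = PACKAGE_WATTS * PACKAGES / 1e9

print(f"Memory per package: {total_gb} GB ({total_tb:.1f} TB)")
print(f"Fleet power: {fleet_gw:.2f} GW")
```

In decimal units, 6,144GB is about 6.14TB, which is consistent with the "beyond 6TB" description.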

HBM8, projected for 2038, raises bandwidth to 64TB/s per stack and capacity to as much as 240GB, using 16,384 I/O lanes running at 32Gbps each.

It also features coaxial through-silicon vias (TSVs), embedded cooling, and double-sided interposers.
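The HBM8 per-stack bandwidth follows from the lane count and per-lane speed. The sketch below shows that relationship using the 16,384 I/O and 32Gbps figures quoted above; it assumes every lane transfers at the full rate simultaneously with no protocol overhead, and the variable names are illustrative.

```python
# Figures quoted in the article for an HBM8 stack.
IO_LANES = 16_384
GBPS_PER_LANE = 32         # gigabits per second per lane

# Aggregate rate in gigabits, then gigabytes (8 bits per byte).
total_gbps = IO_LANES * GBPS_PER_LANE          # 524,288 Gb/s
total_gbytes_per_s = total_gbps / 8            # 65,536 GB/s

# The quoted 64TB/s matches when 1 TB is counted as 1,024 GB.
tb_per_s_binary = total_gbytes_per_s / 1024    # 64.0
tb_per_s_decimal = total_gbytes_per_s / 1000   # 65.536

print(f"{tb_per_s_binary:.0f} TB/s (1,024 GB per TB)")
print(f"{tb_per_s_decimal:.1f} TB/s (1,000 GB per TB)")
```

The same formula, lanes multiplied by per-lane rate and divided by eight, can be applied to any generation for which both figures are known.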

The growing demands of AI and large language model (LLM) inference have driven researchers to introduce concepts like HBF (High-Bandwidth Flash) and HBM-centric computing.

These designs propose integrating NAND flash and LPDDR memory into the HBM stack, relying on new cooling methods and interconnects, but their feasibility and real-world efficiency remain to be proven.